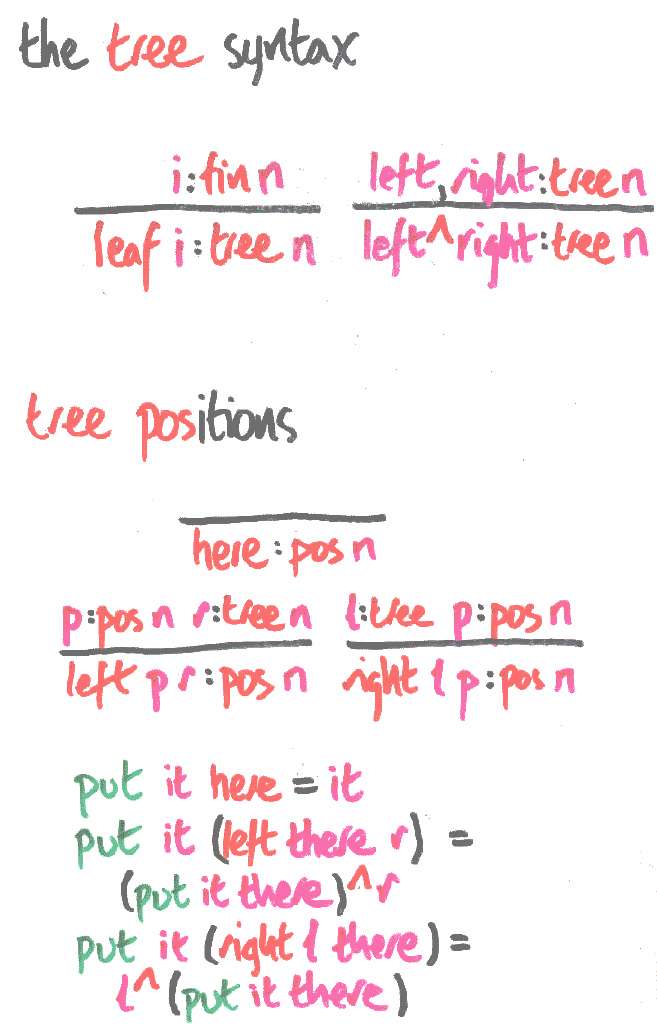

This tree syntax, viewing the leaves as variables indexed by finite sets, seems a convenient first-order starting point. This talk develops a unification algorithm for these trees. Perhaps I should have called leaf `var' or `flex' to make this a bit more explicit. Truth to tell, I arrived at these trees from ML-style function types with polymorphic type variables, but there are enough types around at the meta-level in this talk, so I thought better of talking about types at the object-level too.

A position is a path from the root of a tree to a deleted subtree, recording the subtrees passed on the way. It's a one-hole context, and the put function puts a tree in the hole. These things are a bit like Huet's `zippers', but he gives the path from the deleted subtree back to the root---a more flexible presentation in general, but slightly more awkward for the work in this talk.

Positions form a monoid with here as the unit and the obvious `append' composition. put gives this monoid an action on trees. It's not hard to show that here is the only position that can behave like the identity, ie that the trees contain no cycles (one way: do structural induction on the tree), then cases on the position).

Note that each tree type is labelled by the number of distinct variables which may appear in its inhabitants. Because the syntax is first-order, this label is essentially parametric for the datatype. For any given tree, it stays constant throughout. Once syntax involves binding, eg untyped lambda terms...

t : Lam sn

--------------------------------------

lam t : Lam n

s, t : Lam n

----------------------------------------

app s t : Lam n

...the number of variables becomes an index, and dependent types begin to bite.

This parameter/index distinction (I think they're Dybjer's names) is the same as that between flat ML-style polymorphism (think list) and `nested types'. I could have defined the trees flat-polymorphically over a type of identifiers. Richard Bird (and others) define lambda terms in the nested type style, with

Nested types allow dependent families of types whose indices are other types. This is a sensible and principled way to add dependent types to functional programming languages with general recursion, because it maintains the separation of terms (which are nasty) from types (which are nice).

However, in a strongly normalising type theory, terms are even nicer than types, because we can do computation on them safely. Does this give us more programs over essentially the same data structures? Or at least more easily verifiable programs?

Next: mapping the leaves | Prev: through thick and thin | Up: contents