So here's the unification algorithm. It takes (a `source' number of variables n and) two terms (with at most n variables). The return type is made with option to allow for the possibility of failure. A successful return is, in fact, a pair: the `target' number of variables and a source-to-target substitution. Since the former is inferrable from the latter, I casually omit it.

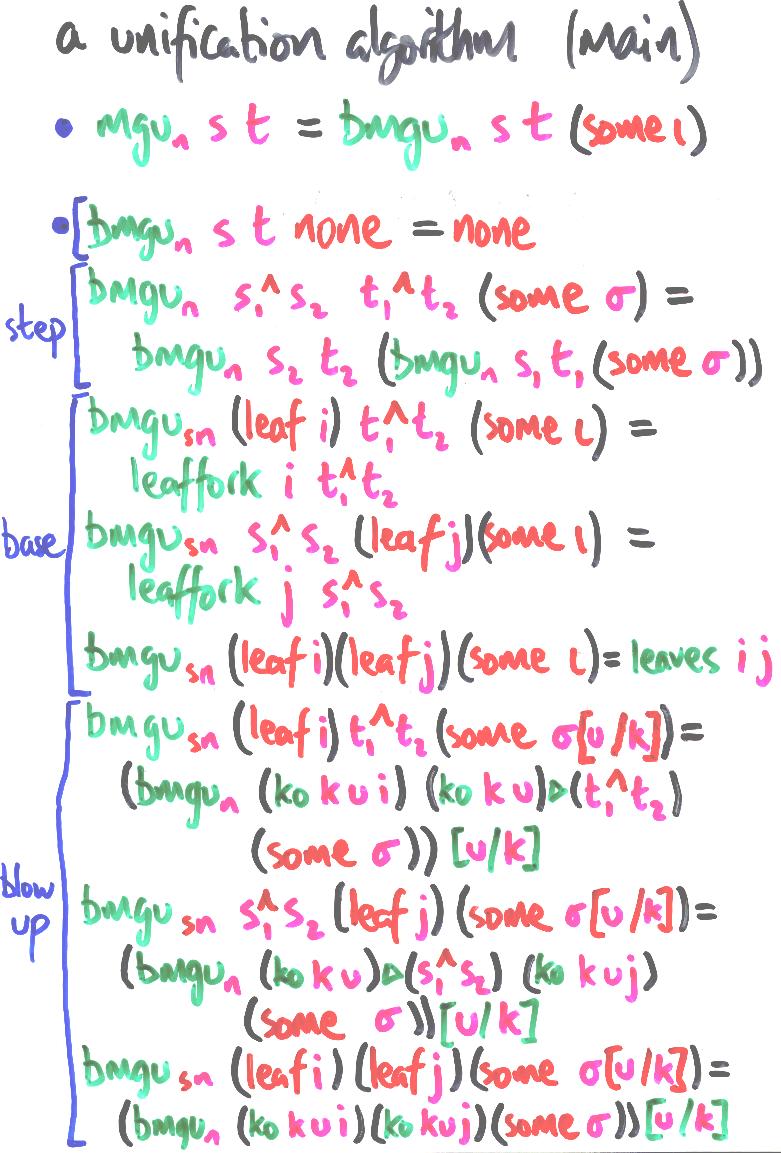

Of course, the main function just calls its bounded cousin with the most optimistic guess it can think of.

I've grouped the lines of bmgu behaviourally as follows:

Now, the fourth of these is apparently non-structural, in that it blows up the terms. Aha, but it is structural! It decreases the source number of variables! The nested recursion structure goes as follows (outer to inner):

Do I really need the induction on the source number of variables? I don't believe I can get away with just an outer structural recursion on the accumulator. Think what happens to the rigid-rigid case when the accumulator is iota: I can only make recursive calls with accumulator iota, so I can't pass the accumulator from one branch to the other. Induction on the source number allows me any substitution descending from that set of variables.

This is a key question if we are to understand the status of dependently typed programming, relative to programming with, say, nested types. In this program, I make computational use of type indices: I exploit directly the dependency of types on terms. If this index recursion is critical (and I suspect it is), then this program shows that terms-in-types really buys us something.

Certainly, simply-typed versions require general recursion. There's plenty of technology supporting proofs of such programs these days, including neat separation of partial correctness from termination. There is still an overhead in attaching an appropriate well-founded ordering externally and proving that it is respected by the program. I get termination for free, and I get a bona fide LEGO term which provably computes most general unifiers, with no need of propositional jiggery-pokery to sustain its recursive behaviour.

Next: base cases | Prev: embeddings | Up: contents