Instead of writing thick directly, I can prove an elimination rule for fin sn which, for any given w performs case analysis between `patterns' w and (thin w j). If this rule eliminates over datatypes, I can use it to write programs. In particular, the program for thick moves a great deal closer to its specification.

Extending this idea, I can prove an elimination rule for trees which internalises the search involved in check. The patterns (put (leaf w) there) and ((thin w) |> t) are certainly exhaustive and disjoint. The problem is that a tree containing several (leaf w)'s gives rise to an ambiguous match for there. We may get different interpretations depending on whether we want, say

This suggestion bears a strong resemblance to Phil Wadler's neat idea of allowing different `views' of a datatype. He gives various examples, eg viewing cons-lists as snoc-lists, all of which are expressible as nonstandard elimination rules for datatypes. I go further in two ways:

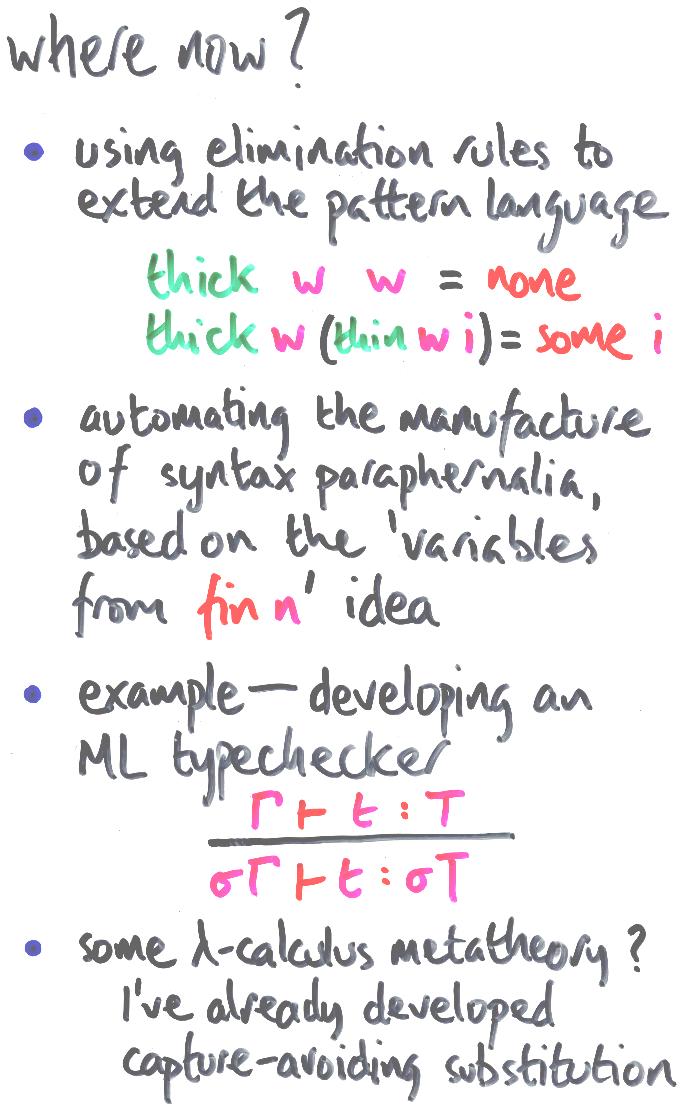

The use of elimination rules to extend the pattern language takes the spirit of the idea, but ensures correctness. The proof of the new rule (by established elimination rules such as structural induction) effectively tells the compiler how to unwind the view in terms of computational mechanisms already supported.

There seems great strength and no loss of principle in the idea that a compiler should be able to exploit extensions justified by theorem proving.

I speak not without some experience here. Along with a selection of genetic disorders, I inherited Fred McBride's 1970 implementation of LISP with pattern matching. Patterns consisted of atomic constants, atomic variables and cons of patterns, and may be elaborated with side-conditions: nonlinear patterns are interpreted via the decidable intensional equality on s-expressions (`EQUAL' to lispers).

In 1989, I extended this pattern language to admit the `append' symbol. (Note, list is its own position type and append is the put-function.) Parsers for simple grammars follow the productions transparently, line by line. The interpreter also ran internalised search a lot faster than explicitly recursive search, although it is hard to tell whether this speedup would survive compilation.

I'm fed up with constructor patterns. I want the programs I had when I was a kid, and I hope I can get them without general recursion. Except for the six line Turing machine simulator, of course.

Moving on, it's fairly clear that much of the syntax manipulation equipment I built for trees generalises. Indeed, it's not that hard to add binding. Maybe it's worth automating its construction.

There are three examples I have in mind for further study:

And that's that. There's a lot more in this commentary than there was in any instance of my talk. More detail in my thesis...

Up: contents | Prev: conclusions